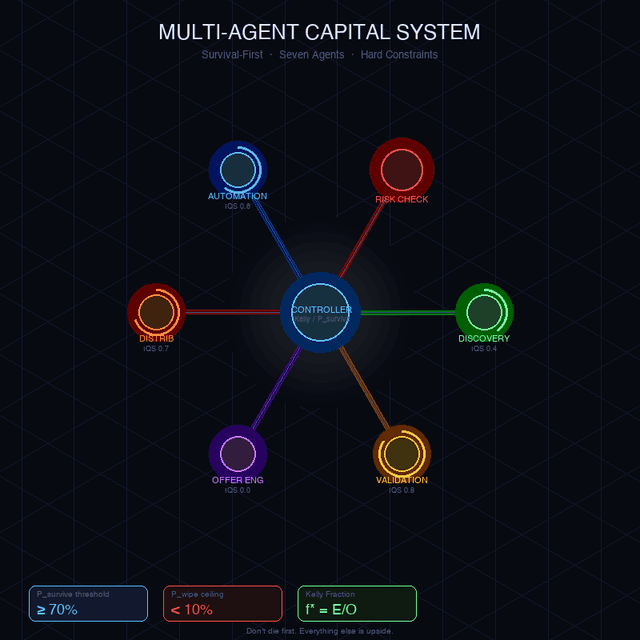

Multi-Agent Capital System

How I designed a fleet of specialised AI agents governed by hard mathematical constraints — and why "don't die" beats "grow fast" every time.

First posted: May 18 2026

Read time: 4 minutes

Written By: Steven Godson

Building a Survival-First Multi-Agent Capital System

The Problem With Most AI Agent Systems

Most multi-agent architectures are built around capability: what can each agent do? I built mine around a different question — what can each agent spend, and what happens if it’s wrong?

When you’re deploying autonomous agents against real capital, optimism is a bug. An agent that confidently recommends scaling spend based on five data points isn’t being helpful; it’s being dangerous. I needed a system where the architecture itself, not just the prompts, enforced epistemic humility.

This is the story of how I built the MCP (Multi-Agent Capital Protocol) system — a fleet of seven specialised agents governed by a single inviolable principle: survival dominates all upside considerations.

The Architecture: A Fleet With a Constitution

The system is made up of seven agents, each with a tightly scoped role, a defined capital authority, and a set of hard prohibitions. No agent operates in isolation. Every allocation decision flows through a central governance layer before it touches real capital.

Here’s how the fleet breaks down:

🔭 Opportunity Discovery Agent

The ideation engine. It generates hypotheses and scans for opportunities — but critically, it has zero capital authority. Its only job is to produce falsifiable ideas with qualitative rationale and rough demand estimates. By capping its Information Quality Score (IQS) at 0.4, I’m formally acknowledging that raw hypotheses are low-confidence by definition. An agent that can’t spend money can’t cause financial harm by being over-optimistic.

✅ Validation Agent

Before a hypothesis becomes a commitment, it gets tested. The Validation Agent runs rapid falsification experiments with a hard ceiling of £150 per test and a maximum of three tests running in parallel. I require pass/fail metrics to be defined before a test begins — not after — and results must land within 72 hours. If more than half the tests fail, the budget is automatically halved. The IQS cap only lifts to 1.0 after real transactions are on the board.
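To make those limits concrete, here is a minimal Python sketch of how the Validation Agent's rules could be encoded. The class name `ValidationBudget`, its fields, and the idea of tracking one running budget are assumptions for illustration, not the system's actual code. Only the £150 ceiling, the three-test limit, the requirement to define metrics first, and the halving rule come from the description above.

```python
from dataclasses import dataclass

MAX_TEST_COST_GBP = 150.0  # hard ceiling per test, from the post
MAX_PARALLEL_TESTS = 3     # parallel-test limit, from the post


@dataclass
class ValidationBudget:
    """Hypothetical container for the Validation Agent's test budget."""

    budget_gbp: float

    def can_start(self, cost_gbp: float, running: int, metrics_defined: bool) -> bool:
        """Allow a new test only if every hard constraint holds."""
        return (
            metrics_defined                      # pass/fail metrics fixed up front
            and cost_gbp <= MAX_TEST_COST_GBP
            and cost_gbp <= self.budget_gbp
            and running < MAX_PARALLEL_TESTS
        )

    def settle(self, results: list[bool]) -> None:
        """Halve the budget when more than half of the finished tests failed."""
        failures = results.count(False)
        if failures > len(results) / 2:
            self.budget_gbp /= 2
```

Note that exactly half failing does not trigger the cut in this sketch, because the rule says "more than half".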

🎯 Offer Engineering Agent

Once demand is validated, the Offer Engineering Agent translates signals into concrete offers: exact pricing, sales mechanism, and positioning. Crucially, it has no capital authority at all — it cannot run ads, cannot purchase tooling, and cannot assume conversion uplift without validation data backing it up. I designed it as a pure design function, nothing more.

📣 Distribution Growth Agent

Customer acquisition and channel execution. This agent can spend, but within tight guardrails I set deliberately: a maximum of 20% of remaining capital and a daily spend cap of £50. Every proposal must specify channel, volume, and customer acquisition cost (CAC) assumptions upfront. If CAC worsens across the majority of simulation runs, spend is automatically cut by 50%. I made speculative scaling an explicit prohibition: not a guideline, a prohibition.
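The guardrails combine into a single spend ceiling, which the following sketch illustrates. The function name and parameters are hypothetical. I am also assuming the 20% limit applies per proposal and that the 50% cut is applied to the amount that would otherwise be approved; the post does not spell out either detail.

```python
MAX_CAPITAL_SHARE = 0.20  # share of remaining capital, from the post
DAILY_CAP_GBP = 50.0      # daily spend cap, from the post


def approved_spend(
    requested_gbp: float,
    remaining_capital_gbp: float,
    spent_today_gbp: float,
    cac_worsened_runs: int,
    total_runs: int,
) -> float:
    """Return the spend allowed for one proposal under the guardrails."""
    # The tighter of the two limits wins.
    ceiling = min(
        MAX_CAPITAL_SHARE * remaining_capital_gbp,
        DAILY_CAP_GBP - spent_today_gbp,
    )
    spend = max(0.0, min(requested_gbp, ceiling))

    # Cut by 50% if CAC worsened in the majority of simulation runs.
    if total_runs > 0 and cac_worsened_runs > total_runs / 2:
        spend *= 0.5
    return spend
```

For example, a £80 request against £1,000 of remaining capital with nothing spent today is limited by the daily cap rather than the 20% share.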

⚙️ Automation Ops Agent

This agent removes human bottlenecks and reduces execution cost. Any automation it proposes must break even within 14 days, and its capital draw is capped at 10% of remaining funds. I set the IQS cap conservatively at 0.6 unless the automation is already directly linked to revenue — I’m not interested in building infrastructure for a future that hasn’t arrived yet.
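A rough sketch of the break-even gate, assuming an automation's benefit can be expressed as a flat daily saving. The function name and the `daily_saving_gbp` parameter are illustrative assumptions; the 14-day window and 10% cap are the figures stated above.

```python
BREAK_EVEN_DAYS = 14      # maximum payback window, from the post
MAX_CAPITAL_SHARE = 0.10  # share of remaining capital, from the post


def automation_allowed(
    cost_gbp: float,
    daily_saving_gbp: float,
    remaining_capital_gbp: float,
) -> bool:
    """Approve an automation only if it pays back in time and fits the cap."""
    if daily_saving_gbp <= 0:
        return False  # no measurable saving means it never breaks even
    break_even_days = cost_gbp / daily_saving_gbp
    within_cap = cost_gbp <= MAX_CAPITAL_SHARE * remaining_capital_gbp
    return break_even_days <= BREAK_EVEN_DAYS and within_cap
```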

🛡️ Risk Reality Check Agent

My favourite part of the system. This is an adversarial governance agent with veto power over any allocation exceeding 10% of capital. It can downgrade the IQS of any other agent, flag over-optimistic assumptions, and surface legal, financial, and execution risks. It cannot allocate capital itself — its power is purely preventative. I measure its success not by revenue generated, but by capital-destructive decisions prevented.
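The two powers described here, review above a threshold and one-way IQS downgrades, could look something like the sketch below. The `Proposal` type and both function names are hypothetical. The sketch assumes a downgrade can only lower a score, which matches the word "downgrade" but is not stated explicitly.

```python
from dataclasses import dataclass, replace

VETO_THRESHOLD = 0.10  # allocations above 10% of capital are vetoable, from the post


@dataclass(frozen=True)
class Proposal:
    """Hypothetical allocation request from another agent."""

    agent: str
    amount_gbp: float
    iqs: float


def needs_risk_review(proposal: Proposal, capital_gbp: float) -> bool:
    """True when the allocation is large enough to fall under veto power."""
    return proposal.amount_gbp > VETO_THRESHOLD * capital_gbp


def downgrade_iqs(proposal: Proposal, new_iqs: float) -> Proposal:
    """Return a copy with a lower IQS; the score is never raised."""
    return replace(proposal, iqs=min(proposal.iqs, new_iqs))
```

Neither function moves capital, which mirrors the agent's purely preventative role.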

🧮 Controller Agent

The authoritative brain of the whole system. The Controller owns and mutates the global capital state, runs all Monte Carlo simulations, computes Kelly fractions, and enforces kill switches. It has the final say on every allocation — and I deliberately made that authority non-delegable. The system invalidates itself if the capital survival probability (P_survive) drops below 70%, or if the probability of total wipe-out (P_wipe) exceeds 10%.
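As an illustration of how the kill switch might sit on top of a Monte Carlo run, here is a small self-contained sketch. The post does not define "survival" or "wipe-out" numerically, so the `survive_floor` and `wipe_floor` parameters, the repeated-bet model, and both function names are assumptions of mine. Only the 70% and 10% thresholds come from the text.

```python
import random

P_SURVIVE_MIN = 0.70  # from the post
P_WIPE_MAX = 0.10     # from the post


def estimate_probabilities(
    start_capital: float,
    stake_fraction: float,
    p_win: float,
    payoff_ratio: float,
    rounds: int = 50,
    runs: int = 5000,
    survive_floor: float = 0.5,  # assumed: ending with >= 50% of capital counts as survival
    wipe_floor: float = 0.05,    # assumed: ending with <= 5% of capital counts as wipe-out
    seed: int = 0,
) -> tuple[float, float]:
    """Estimate (P_survive, P_wipe) for repeated fixed-fraction bets."""
    rng = random.Random(seed)  # seeded for reproducible estimates
    survived = wiped = 0
    for _ in range(runs):
        capital = start_capital
        for _ in range(rounds):
            stake = capital * stake_fraction
            if rng.random() < p_win:
                capital += stake * payoff_ratio
            else:
                capital -= stake
        if capital >= survive_floor * start_capital:
            survived += 1
        if capital <= wipe_floor * start_capital:
            wiped += 1
    return survived / runs, wiped / runs


def kill_switch_tripped(p_survive: float, p_wipe: float) -> bool:
    """True when either survival threshold from the post is breached."""
    return p_survive < P_SURVIVE_MIN or p_wipe > P_WIPE_MAX
```

A caller would run `estimate_probabilities` for a proposed stake size and reject the allocation whenever `kill_switch_tripped` returns `True`.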

The Design Principles That Shaped Everything

1. Separation of Ideation From Execution

The Opportunity Discovery Agent can dream freely precisely because it cannot spend. The Validation Agent can run experiments precisely because it cannot scale. Separating the permission to imagine from the permission to act creates a natural firewall against wishful thinking propagating into capital decisions.

2. IQS as a Formal Confidence Register

The Information Quality Score isn’t a vibe check — it’s a hard gate. Every agent has an IQS ceiling that reflects how much real-world evidence backs its claims. An agent working from hypotheses alone is capped at 0.4. One that has seen real transactions can reach 1.0. This means the system automatically demands more proof before granting more authority.
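One simple way to express that gate is to clamp whatever confidence an agent claims to the ceiling its evidence allows. The `Evidence` enum and `effective_iqs` function below are hypothetical names; the 0.4 and 1.0 ceilings are the ones given above.

```python
from enum import Enum


class Evidence(Enum):
    """Hypothetical evidence levels behind an agent's claim."""

    HYPOTHESIS = "hypothesis"
    REAL_TRANSACTIONS = "real_transactions"


# Ceilings stated in the post.
IQS_CAPS = {
    Evidence.HYPOTHESIS: 0.4,
    Evidence.REAL_TRANSACTIONS: 1.0,
}


def effective_iqs(claimed_iqs: float, evidence: Evidence) -> float:
    """Clamp a claimed score to the ceiling for the available evidence."""
    if not 0.0 <= claimed_iqs <= 1.0:
        raise ValueError("IQS must be between 0.0 and 1.0")
    return min(claimed_iqs, IQS_CAPS[evidence])
```

However confident a hypothesis-only agent claims to be, downstream decisions in this sketch never see a score above 0.4.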

3. Survival as the North Star

The Controller’s governing invariant is stark: survival dominates all upside considerations. This isn’t pessimism; it’s the mathematically correct response to operating under genuine uncertainty. The Kelly criterion shows that staking more than the optimal fraction lowers long-run compound growth, and staking far beyond it turns growth negative, even when the underlying edge is real. The MCP system encodes this at the architectural level.
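The Kelly point is easy to check numerically. The sketch below uses the standard Kelly formula for a simple win/lose bet, which is an assumption on my part about the kind of bet being modelled; the post does not say which form the Controller uses. Function names are illustrative.

```python
import math


def kelly_fraction(p_win: float, payoff_ratio: float) -> float:
    """Kelly stake for a binary bet that pays payoff_ratio per unit staked."""
    f = p_win - (1.0 - p_win) / payoff_ratio
    return max(0.0, f)  # a negative value means no edge, so stake nothing


def log_growth(f: float, p_win: float, payoff_ratio: float) -> float:
    """Expected log growth per bet when staking fraction f of capital."""
    if not 0.0 <= f < 1.0:
        raise ValueError("f must be in [0, 1)")
    return p_win * math.log(1.0 + f * payoff_ratio) + (1.0 - p_win) * math.log(1.0 - f)
```

With a 60% win rate at even odds, `kelly_fraction(0.6, 1.0)` is about 0.2 and `log_growth(0.2, 0.6, 1.0)` is positive. Doubling the stake to 0.4 makes `log_growth(0.4, 0.6, 1.0)` negative: the edge is still real, but capital shrinks over time.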

4. Adversarial Governance by Design

The Risk Reality Check Agent exists to be the voice of doubt. In most organisations, the person who raises concerns is swimming against the cultural current. Here, that voice has structural veto power. Building in adversarial governance means the system gets robustly stress-tested without relying on any individual’s courage to speak up.

5. Hard Constraints Beat Soft Guidelines

Every agent contract specifies not just what an agent should do, but what it cannot do. Prohibitions are explicit: no speculative scaling, no spend without validated demand, no grandfathered allocations, no delegation of final approval. Soft guidelines get eroded under pressure. Hard prohibitions don’t.
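A hard prohibition differs from a guideline in that violating it fails loudly instead of logging a warning. The sketch below shows one possible shape for such a contract. The `AgentContract` class, the `ProhibitedAction` exception, and the action labels are all hypothetical; the 20% figure and the speculative-scaling prohibition are taken from the Distribution Growth Agent described earlier.

```python
from dataclasses import dataclass


class ProhibitedAction(Exception):
    """Raised when an agent attempts something its contract forbids."""


@dataclass(frozen=True)  # frozen: a contract cannot be mutated at runtime
class AgentContract:
    name: str
    version: str
    max_capital_share: float      # fraction of remaining capital, 0.0 to 1.0
    prohibitions: frozenset[str]  # hypothetical action labels

    def authorise(self, action: str, capital_share: float) -> None:
        """Raise instead of warning: a prohibition is not negotiable."""
        if action in self.prohibitions:
            raise ProhibitedAction(f"{self.name}: '{action}' is prohibited")
        if capital_share > self.max_capital_share:
            raise ProhibitedAction(
                f"{self.name}: {capital_share:.0%} exceeds cap of "
                f"{self.max_capital_share:.0%}"
            )


distribution = AgentContract(
    name="distribution_growth",
    version="1.1",
    max_capital_share=0.20,
    prohibitions=frozenset({"speculative_scaling"}),
)
```

Making the dataclass frozen means changing a limit requires constructing a new contract with a new version, which fits the versioning discipline described later in the post.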

What I Learned

The most counterintuitive lesson from building this system is that constraint is a feature, not a limitation. Agents with tightly bounded authority are faster to reason about, safer to deploy, and easier to audit when something goes wrong. The system’s conservatism in early stages (low IQS caps, small spend limits, mandatory break-even windows) is precisely what creates the evidence base needed to act more boldly later.

I also learned that versioning agent contracts matters. Each contract carries an explicit changelog. Even when a version increment carries no semantic changes, the discipline of versioning creates accountability and makes it possible to trace exactly when the system’s behaviour changed and why.

The MCP system is not finished. It’s designed to be iterated on as the evidence base grows, IQS ratings update, and capital state changes. That’s the point: a governance architecture that evolves with its own track record, always anchored to the one constraint that can’t be negotiated away.

Don’t die first. Everything else is upside.

This post describes the v1.1 agent contract architecture. All capital authority figures and IQS thresholds reflect current system configuration.